When I began this blog, some ten years ago, it was for the express purpose of both promoting my writing and discussing writing in general. Since then, although I have certainly used it for that purpose, promoting my books and zines, posting the occasional poem, writing the occasional review of other books, and posting discussion topics on the subject, the blog has almost inevitably drifted into other waters. Since I enjoy travel so much, I began to post photographs and memories of those travels. I put up pictures of my artwork, since this seemed an obvious (and free!) place to promote it. I added articles on the British countryside, mythology and folklore, and customs. Like most people, I have wide interests and this is a good format to record them in.

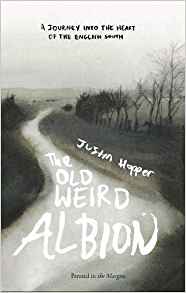

The Old Weird Albion, by Justin Hopper. Reviewed in 2019

One of my great pleasures has been the meeting of minds. We follow each other, read posts and comment, foment discussions. And it is a safe place! Unlike social media, it is very rare for strangers to barge in and attack other users. And on the very rare occasions this happens, it is easy to just block them. This makes it a much more enjoyable place to spend time. And there are no algorithms pushing contentious posts at the reader.

Mount Everest, photographed from Tengboche in Nepal. From a post in 2021

But for the last couple of years I have been rather tardy in both posting and reading others’ blogs. Part of the reason is that, since retiring, I seem to have less free time than I did before. I’m not really sure why that is. But I’m still here. And to get myself back into the swing of things, as well as writing some new posts, I’ll probably re-post a few of the posts I put up a long while back, which many of my current followers won’t have seen.

Recycling is good, after all.

A piece of my artwork.